Live

All manifold CLOUD services run with guaranteed sub-frame latency, just as live event operators expect.
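Sub-frame latency means the processing delay is shorter than one video frame period, which is 1 / frame rate. As a rough illustration (a sketch under our own assumptions, not part of manifold CLOUD; the helper names are hypothetical), the frame period at common progressive broadcast rates can be computed and compared with a measured latency:

```python
from fractions import Fraction

# Common progressive broadcast frame rates as exact fractions (59.94 = 60000/1001).
FRAME_RATES = {
    "25p": Fraction(25),
    "29.97p": Fraction(30000, 1001),
    "50p": Fraction(50),
    "59.94p": Fraction(60000, 1001),
}


def frame_period_ms(fps: Fraction) -> Fraction:
    """Duration of one frame in milliseconds (exact)."""
    return Fraction(1000) / fps


def is_sub_frame(latency_ms: float, fps: Fraction) -> bool:
    """Hypothetical helper: True if latency is strictly shorter than one frame."""
    return Fraction(latency_ms) < frame_period_ms(fps)


if __name__ == "__main__":
    for name, fps in FRAME_RATES.items():
        print(f"{name}: {float(frame_period_ms(fps)):.2f} ms per frame")
```

At 50p, for example, a processing delay must stay below 20 ms to count as sub-frame.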

Service Focus

90%

Commercial off-the-shelf (COTS) FPGA accelerators use 90% less power than comparable CPU-based servers.

If you're serious about reducing greenhouse gas emissions, you can't find a more efficient solution!

Automatic

1.6 Tbps

Using the latest-generation COTS FPGA Programmable Acceleration Cards provides up to 1.6 Tbps of processing per rack unit (RU). That's enough to process up to 1024 HD signals!

And because it's networked, if you need more capacity, just add another server!
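The 1024-signal figure can be sanity-checked with simple arithmetic. The sketch below assumes each HD signal is an uncompressed 1.485 Gbps HD-SDI stream (the SMPTE ST 292 nominal rate); that per-signal rate is our assumption, not a figure from the product, and real IP payloads differ. It also assumes each added server contributes the quoted 1024 signals. The helper names are hypothetical.

```python
import math

TOTAL_MBPS_PER_RU = 1_600_000  # 1.6 Tbps per RU, as quoted above
HD_SDI_MBPS = 1_485            # assumption: 1.485 Gbps uncompressed HD-SDI per signal
QUOTED_SIGNALS_PER_SERVER = 1024


def signals_per_ru(total_mbps: int = TOTAL_MBPS_PER_RU,
                   per_signal_mbps: int = HD_SDI_MBPS) -> int:
    """Upper bound on whole signals that fit in the processing budget."""
    return total_mbps // per_signal_mbps


def servers_needed(n_signals: int,
                   per_server: int = QUOTED_SIGNALS_PER_SERVER) -> int:
    """Scale-out estimate: servers required if each adds per_server signals."""
    return math.ceil(n_signals / per_server)


if __name__ == "__main__":
    print("raw fit per RU:", signals_per_ru())
    print("budget per signal at 1024 signals:",
          TOTAL_MBPS_PER_RU / QUOTED_SIGNALS_PER_SERVER, "Mbps")
    print("servers for 3000 signals:", servers_needed(3000))
```

Under these assumptions 1077 HD-SDI streams would fit in 1.6 Tbps, and 1024 signals leave a budget of 1562.5 Mbps each, so the quoted figure sits comfortably inside the raw bandwidth.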

Unlimited

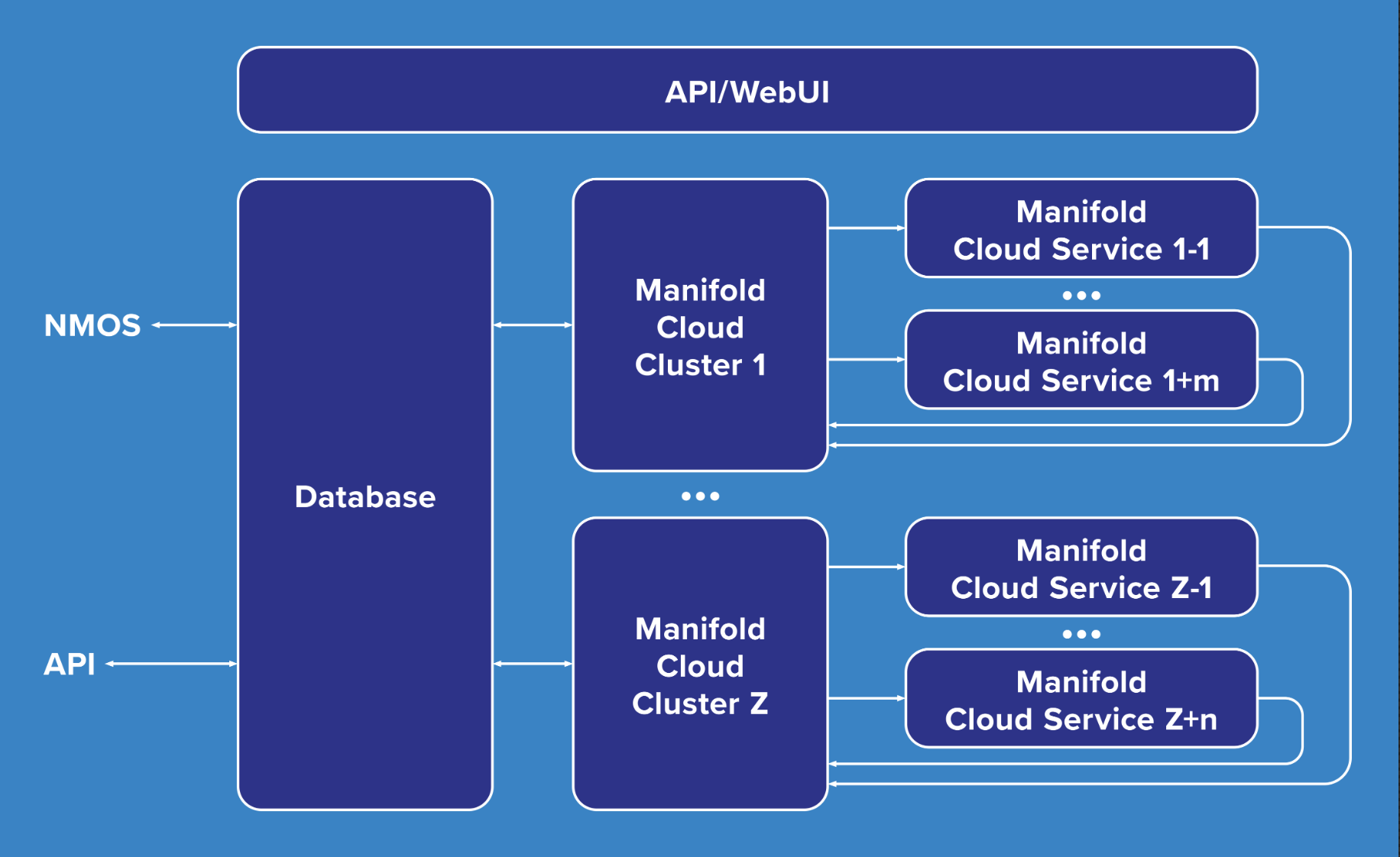

manifold CLOUD is built upon the time multiplexing of services using FPGA High Bandwidth Memory (HBM).
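To illustrate the general idea of time multiplexing (a generic sketch, not manifold CLOUD's actual design): one processing engine serves many independent service contexts in turn, and each context's state is parked in a large memory, standing in for HBM here, between time slots. The class and field names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ServiceContext:
    """Per-service state that would sit in HBM between time slots (hypothetical)."""
    name: str
    slots_processed: int = 0


@dataclass
class TimeMultiplexedEngine:
    """One processing engine shared round-robin by many service contexts."""
    contexts: deque = field(default_factory=deque)

    def add_service(self, name: str) -> None:
        # Adding a service only adds stored state; no extra engine is needed.
        self.contexts.append(ServiceContext(name))

    def run_slot(self) -> str:
        """Load the next context, do one slot of work, store it back."""
        if not self.contexts:
            raise RuntimeError("no services registered")
        ctx = self.contexts.popleft()  # "load" state from memory
        ctx.slots_processed += 1       # stand-in for the real signal processing
        self.contexts.append(ctx)      # "store" state and rotate to the back
        return ctx.name


if __name__ == "__main__":
    engine = TimeMultiplexedEngine()
    for svc in ("cam1", "cam2", "cam3"):
        engine.add_service(svc)
    print([engine.run_slot() for _ in range(6)])
```

Because the engine itself is shared, the number of services is bounded by memory and throughput rather than by a fixed count of hardware channels.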

Unlike other broadcast products on the market, manifold CLOUD does not have a fixed number of senders and receivers.

The only limitation is the amount of bandwidth allocated to the system!
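The idea that capacity is bounded by bandwidth rather than by a fixed port count can be expressed as a simple admission check: any mix of senders and receivers is accepted while the total allocated bandwidth stays within budget. This is a hypothetical sketch; the class, method names and rates are illustrative assumptions, not manifold CLOUD's API.

```python
from dataclasses import dataclass, field


@dataclass
class BandwidthPool:
    """Admits senders and receivers until the allocated bandwidth is used up."""
    budget_mbps: int
    flows: dict = field(default_factory=dict)  # name -> (direction, rate in Mbps)

    @property
    def allocated_mbps(self) -> int:
        return sum(rate for _, rate in self.flows.values())

    def admit(self, name: str, direction: str, rate_mbps: int) -> bool:
        """Add a flow if it fits; there is no separate cap per direction."""
        if direction not in ("sender", "receiver"):
            raise ValueError(f"unknown direction: {direction}")
        if name in self.flows or self.allocated_mbps + rate_mbps > self.budget_mbps:
            return False
        self.flows[name] = (direction, rate_mbps)
        return True

    def release(self, name: str) -> None:
        """Free a flow's bandwidth so it can be reused by any other flow."""
        self.flows.pop(name, None)


if __name__ == "__main__":
    pool = BandwidthPool(budget_mbps=10_000)
    admitted = [pool.admit(f"s{i}", "sender", 1_485) for i in range(7)]
    print(admitted, pool.allocated_mbps)
```

With a 10,000 Mbps budget, six 1,485 Mbps senders fit and a seventh is refused, yet a 1,000 Mbps receiver can still be admitted: only the total matters.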